IMAGINE-decoding-challenge

Can you predict which words participants were hearing, based on brain activity recorded while they were seeing these items?

How well do classifiers trained on visual activity actually transfer to non-visual reactivation?

#Decoding studies often rely on training a classifier in one (visual) condition and applying it to another (e.g. rest reactivation). But how well does this work? Show us what makes it work and win up to $1000!

24.10.2025 06:55

👍 32

🔁 14

💬 3

📌 3

preprint alert 🚨

1/ Can we accurately detect sequential replay in humans using Temporally Delayed Linear Modelling (#TDLM)? In our recent study, we could not find any replay and decided to dig deeper by running a hybrid simulation with surprising results. Link to preprint & details below 👇

16.06.2025 07:22

👍 56

🔁 27

💬 2

📌 2

🚀 Join Us for the Next Mannheim Open Science Meetup! 🚀

🔬 Topic: ARIADNE – A Scientific Navigator to Find Your Way Through the Research Resource Labyrinth

🎙 Speaker: Çağatay Gürsoy, Central Institute of Mental Health

📅 Date & Time: April 30, 2025, 3:00 PM

📍 Location: Register online lnkd.in/exErgJfy

20.03.2025 12:04

👍 13

🔁 5

💬 2

📌 2

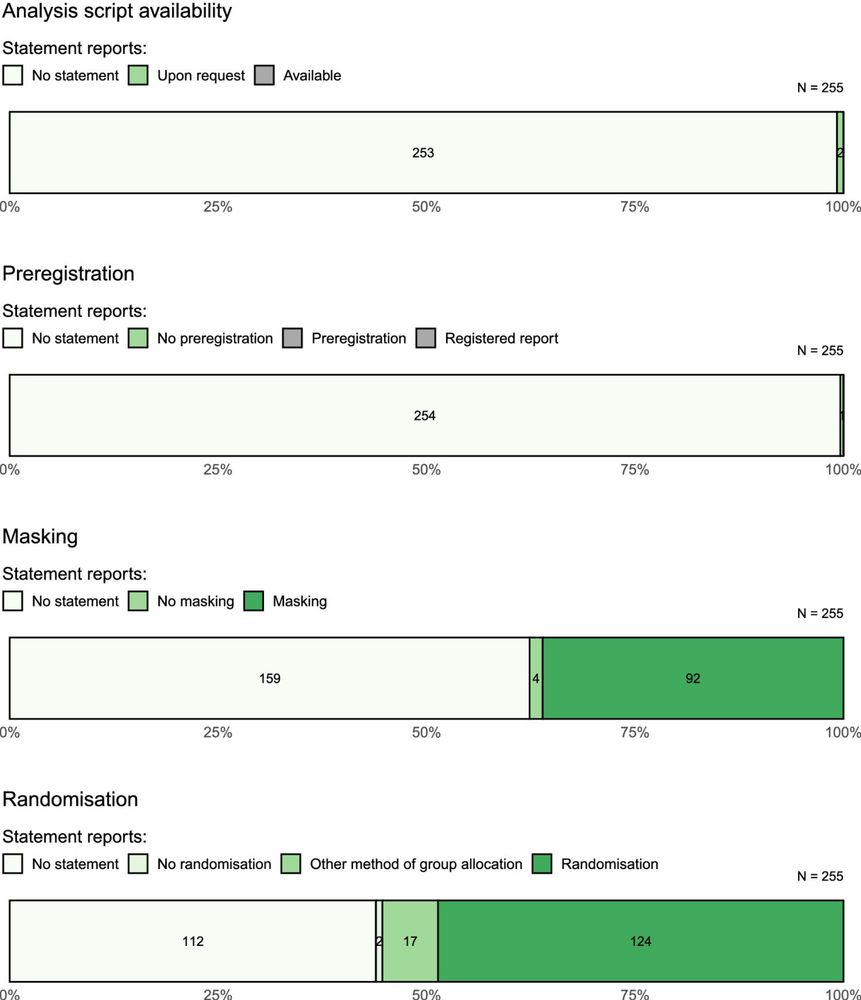

Also, credit where credit is due: Figure 2 is based on code by the amazing @tomhardwicke.bsky.social and this paper doi.org/10.1098/rsos...! Thanks for sharing your code openly, Tom!

08.04.2025 11:35

👍 1

🔁 0

💬 1

📌 0

Proud to share this new meta-science article—our analysis of 255 preclinical opioid addiction studies highlights a pressing need for better transparency and reproducibility. Big thanks to Justine Blackwell and @alexh.bsky.social – it was an honor working with you on this project! :)

08.04.2025 08:54

👍 14

🔁 6

💬 1

📌 0

I can highly recommend this opportunity for postdocs in cognitive neuroscience 👇

04.02.2025 09:27

👍 1

🔁 1

💬 0

📌 0

OSF

New preprint on "Attitudes Toward Open Science Practices"!

We asked 596 German psychologists about their worries and hopes regarding open science practices!

ECRs reported more worries AND more fears, but the more you use OS, the fewer worries and the more hopes you have.

osf.io/preprints/ps...

28.01.2025 09:50

👍 21

🔁 6

💬 1

📌 0

I made one for stats papers

18.11.2024 04:02

👍 545

🔁 149

💬 15

📌 28

@juliabeitner.bsky.social presents LIFOS, a platform where students can learn about and train in #OpenScience practices in a safe practice environment. 😊✨ #dgps2024

18.09.2024 10:42

👍 4

🔁 1

💬 0

📌 1

If you are interested in our holistic Open Science training platform, dedicated to students, you can find the slides from my talk here 👇😊

osf.io/ug6mk

18.09.2024 13:43

👍 2

🔁 0

💬 0

📌 0

🚨 Deadline extended: We’ve set the #ManyBeds registration deadline to August 31st! 🗓️ If you're interested in contributing to sleep and memory research, there’s still time to get involved. 🧠💤

Join us and help advance this important work.

Sign up now! 👇

09.08.2024 12:28

👍 0

🔁 0

💬 0

📌 0

OSF

New findings of disappointing rates of methodological rigor and transparency! 😐

We coded all 255 papers we found on animal models of opioid addiction published between 2019 and 2023.

Rates of bias minimization practices and sample size calculations were... unsatisfactory.

osf.io/preprints/ps...

31.07.2024 22:19

👍 16

🔁 4

💬 1

📌 0

Yes, certainly! For the replication part, the hypotheses mainly concern behavioral findings and only one hypothesis is about the sleep EEG. We will upload the hypotheses soon. You can also team up with another person with EEG experience if you like. Let me know if you have any more questions :)

25.07.2024 13:45

👍 1

🔁 0

💬 0

📌 0

Thank you Juli! 🙏

25.07.2024 10:31

👍 0

🔁 0

💬 0

📌 0

Flyer of the ManyBeds project. The left side shows the logo in white on a colorful gradient background. On the right is a brief description of the study and the link to the project’s website.

Interested in sleep and memory research? 💤🧠 Then join the #ManyBeds project! 🛏️ A multi-lab, many-analysts, replication study led by @gordonfeld.bsky.social and me.

We seek contributors for data collection and analysis.

Sign up now! ✨

Learn more here: cimh-clinical-psychology.github.io/ManyBeds/

24.07.2024 14:17

👍 15

🔁 12

💬 1

📌 2

Congratulations! 🎉🥳

28.05.2024 09:53

👍 1

🔁 0

💬 0

📌 0

RETRACTED: A Perception Study for Unit Charts in the Context of Large-Magnitude Data Representation

Unit charts are a common type of chart for visualizing scientific data. A unit chart is a chart used to communicate quantities of things by making the number of symbols on the chart proportional to th...

An article about data visualization was retracted 1.5 years after I pointed out errors.

The notice says that "concerns were raised".

I spent dozens of hours contacting authors and editors, reproducing analyses, and following up on ignored emails.

But I'm not mentioned in the retraction notice.

24.05.2024 11:24

👍 42

🔁 14

💬 3

📌 2

Express interest in repliCATS workshops

The repliCATS project will run a series of workshops in 2024 as part of the SMART: Preprints project in collaboration with the Center for Open Science.

The goal of repliCATS (Collaborative Assessment...

Hey everyone, we have 5 spots left for our in-person repliCATS workshop in Nairobi! If you are going to SIPS2024 @improvingpsych.bsky.social we will be running a workshop on day 2, with a US$250 travel grant for all participants. Express interest: forms.gle/WcbH6Ufizobb... [re-skeets welcome].

22.05.2024 01:09

👍 13

🔁 8

💬 0

📌 2

Zoom on section b) of a figure displaying the experimental procedure. Julia can be seen modeling a participant in VR and in the desktop screen experiment.

Lastly, I’m really grateful to my colleagues of the SceneGrammarLab who made this study happen and allowed me to include photographs of myself in a figure. Look mom, I’m a model in a scientific paper! 💁‍♀️ 4/4

21.05.2024 11:47

👍 0

🔁 0

💬 0

📌 0

We also compared the procedure between VR and 3D desktop screen settings and found only slight differences. This indicates that the screen setup could elicit behavior comparable to that in VR. More research is needed to dive into the nuances and underlying processes 3/4

21.05.2024 11:45

👍 2

🔁 0

💬 1

📌 0

In line with our expectations, limited visual input did not impair, or even benefited, incidental memory of encountered objects. Moreover, spatial memory of scenes only seen with a flashlight was overall just as good as memory of illuminated scenes! 2/4

21.05.2024 11:45

👍 1

🔁 0

💬 1

📌 0

Photo of the Sydney Opera House and Harbour Bridge, framed by mangrove branches.

Again, we have four(!) new positions here in psychology at the University of Sydney.

Like N. American tenure-track, but easier to get "tenure" (become permanent). Let me know if you have any questions! usyd.wd3.myworkdayjobs.com/USYD_EXTERNA... photo: Alex Holcombe

17.03.2024 23:52

👍 29

🔁 26

💬 1

📌 0

Thank you Alex! Means a lot :)

18.03.2024 09:20

👍 0

🔁 0

💬 0

📌 0

Thank you Lisa! ☺️

17.03.2024 08:57

👍 0

🔁 0

💬 0

📌 0

As a psychologist I don’t know about sociology, but it certainly is a question of interest in psychology!

16.03.2024 11:46

👍 1

🔁 0

💬 1

📌 0

The title is “Visual search goes real: Transitioning from the experimentalist’s laboratory to more naturalistic settings”. I studied visual search in VR and evaluated the field as well as VR in terms of ecological validity :)

16.03.2024 11:37

👍 0

🔁 0

💬 1

📌 0